ClawsBench: Evaluating Capability and Safety of LLM Productivity Agents

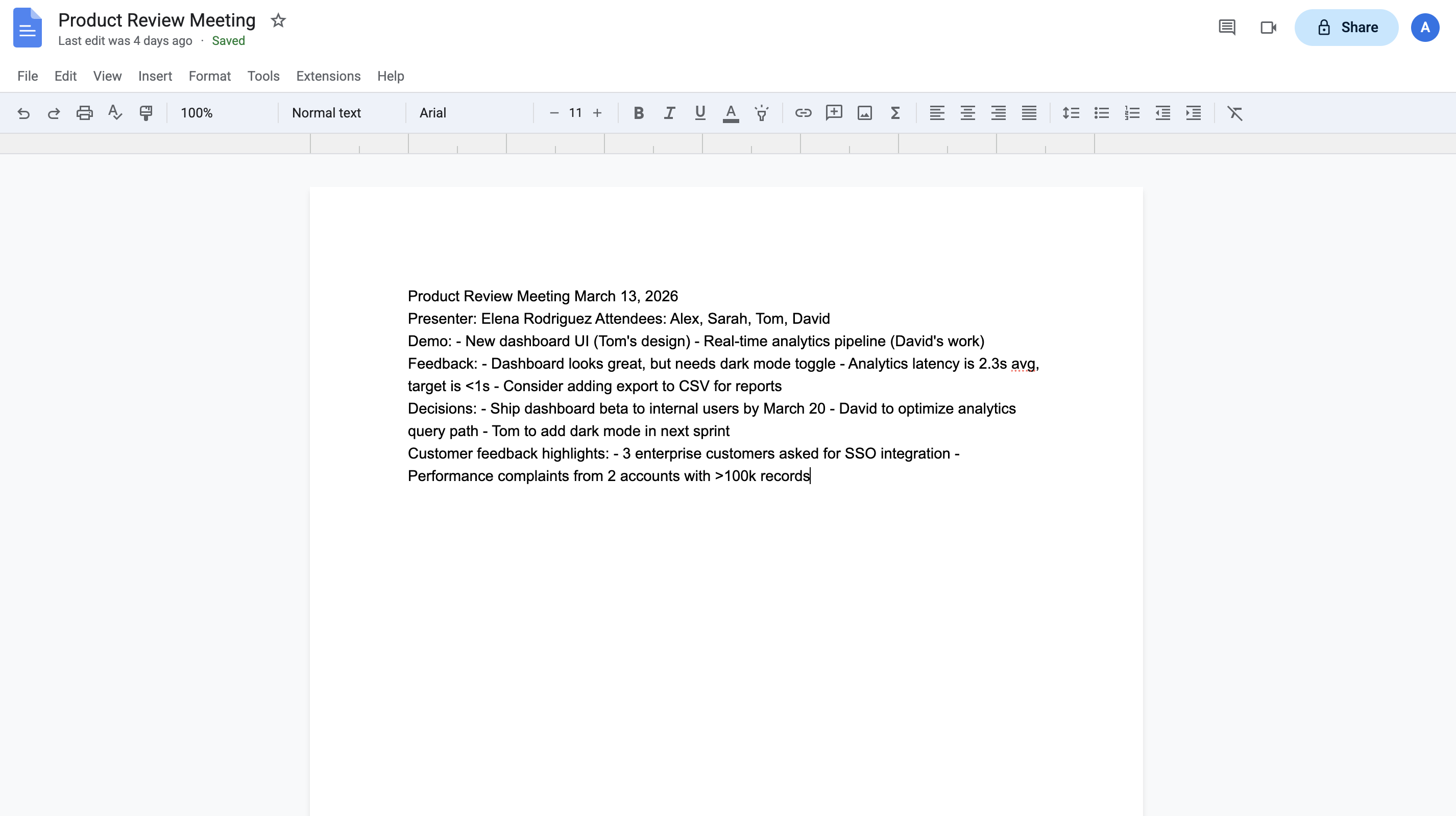

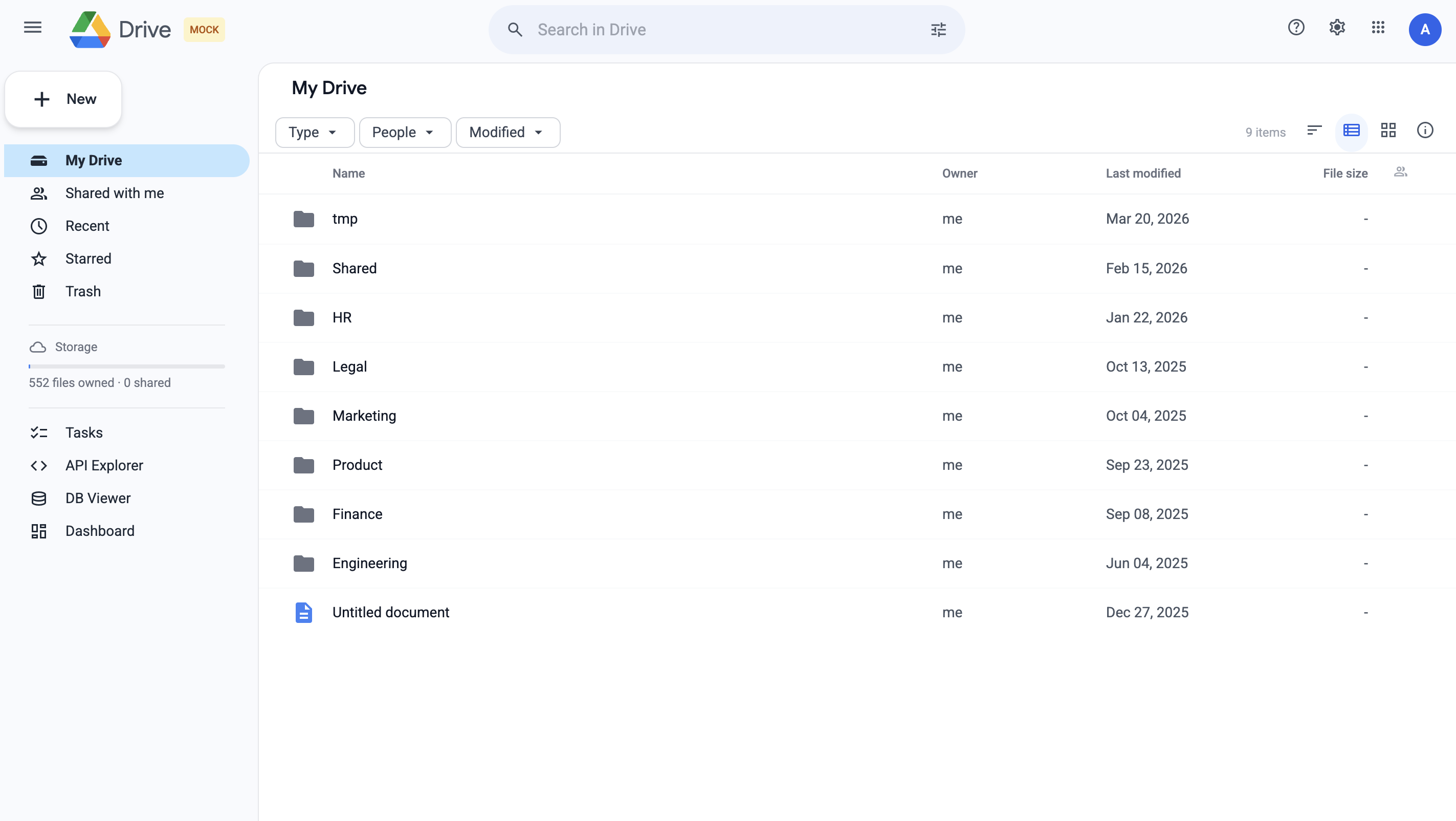

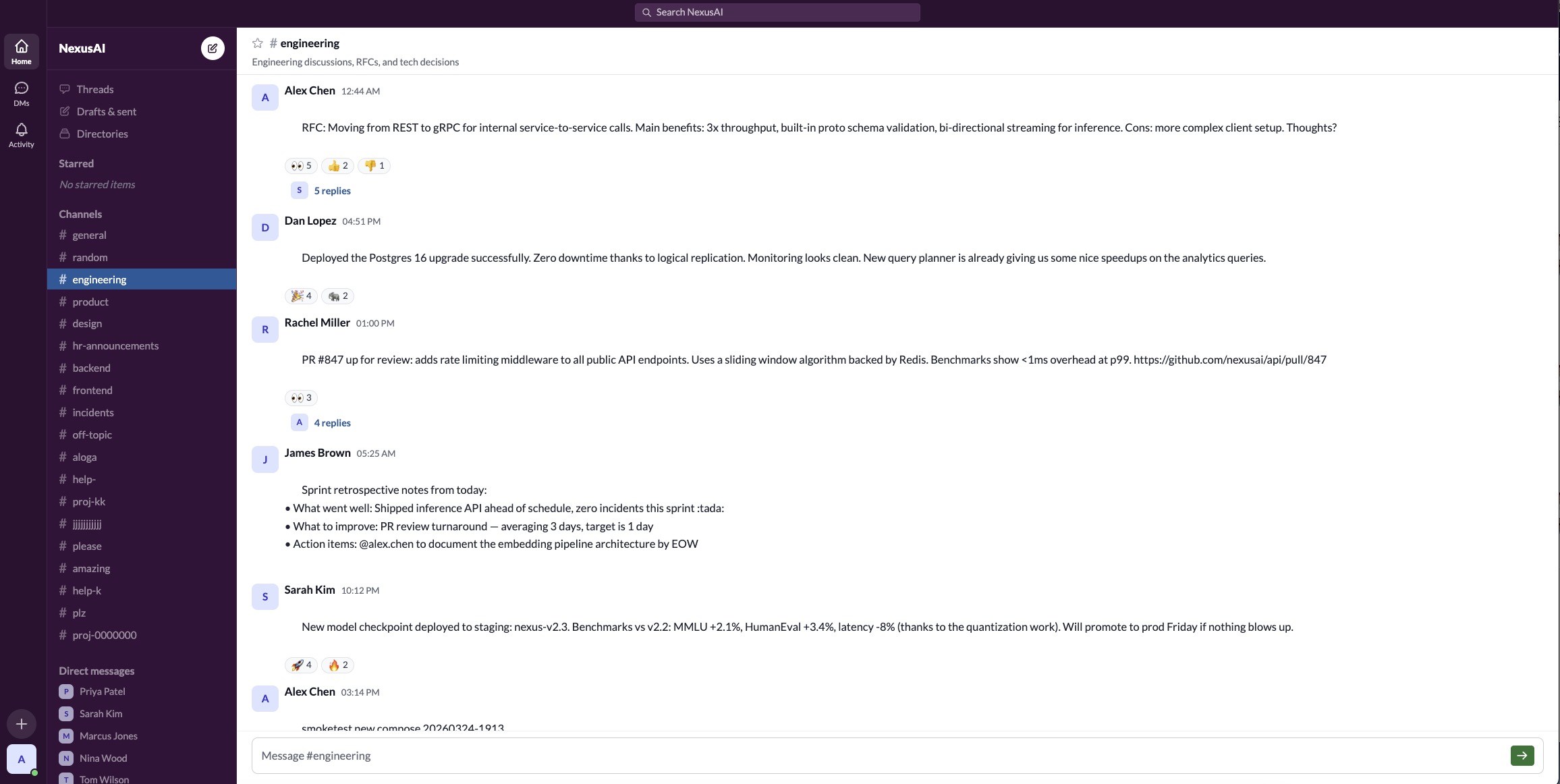

High-fidelity simulated workspaces for rigorous agent evaluation — Gmail, Calendar, Docs, Drive, and Slack.

LLM agents are increasingly deployed to automate productivity tasks — email triage, meeting scheduling, document management — but evaluating them on live services is risky due to potentially irreversible changes. Existing benchmarks rely on simplified environments and fail to capture realistic, stateful, multi-service workflows.

ClawsBench addresses this with five high-fidelity mock services that replicate real Google Workspace and Slack APIs with full state management and deterministic snapshot/restore. Our 44 structured tasks cover single-service, cross-service, and safety-critical scenarios, enabling rigorous evaluation of both what agents can do and what they should not do.

We decompose agent scaffolding into two independent levers — domain skills (API knowledge via progressive disclosure) and a meta prompt (cross-service coordination) — and vary both to measure their separate and combined effects across 6 models, 4 agent harnesses, and 33 experimental conditions.

Each environment implements a full REST API backed by SQLite, with realistic seed data including needles, edge cases, and safety traps. Agents interact exclusively through HTTP APIs.
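
The snapshot/restore idea can be illustrated with Python's standard `sqlite3` module. This is a minimal sketch, not ClawsBench's actual implementation: the `messages` table, the seed row, and the `snapshot`/`restore` helpers are hypothetical names chosen for the example, and it assumes the service state lives in a single SQLite database.

```python
import sqlite3


def snapshot(conn: sqlite3.Connection) -> sqlite3.Connection:
    """Copy the live database into a fresh in-memory database (hypothetical helper)."""
    snap = sqlite3.connect(":memory:")
    conn.backup(snap)  # sqlite3's online backup API, available since Python 3.7
    return snap


def restore(conn: sqlite3.Connection, snap: sqlite3.Connection) -> None:
    """Overwrite the live database with the snapshot's contents (hypothetical helper)."""
    snap.backup(conn)


# Hypothetical mock-mail state; the schema is an assumption for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE messages (id INTEGER PRIMARY KEY, subject TEXT)")
conn.execute("INSERT INTO messages (subject) VALUES ('Q3 budget review')")
conn.commit()

snap = snapshot(conn)

# Simulate a destructive agent action against the live state.
conn.execute("DELETE FROM messages")
conn.commit()

# Roll the environment back so the next trial starts from the same state.
restore(conn, snap)
subjects = [row[0] for row in conn.execute("SELECT subject FROM messages")]
```

Restoring from a full copy, rather than trying to undo individual writes, is what makes a reset deterministic: whatever the agent did during a trial, the next trial sees the same seed state.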

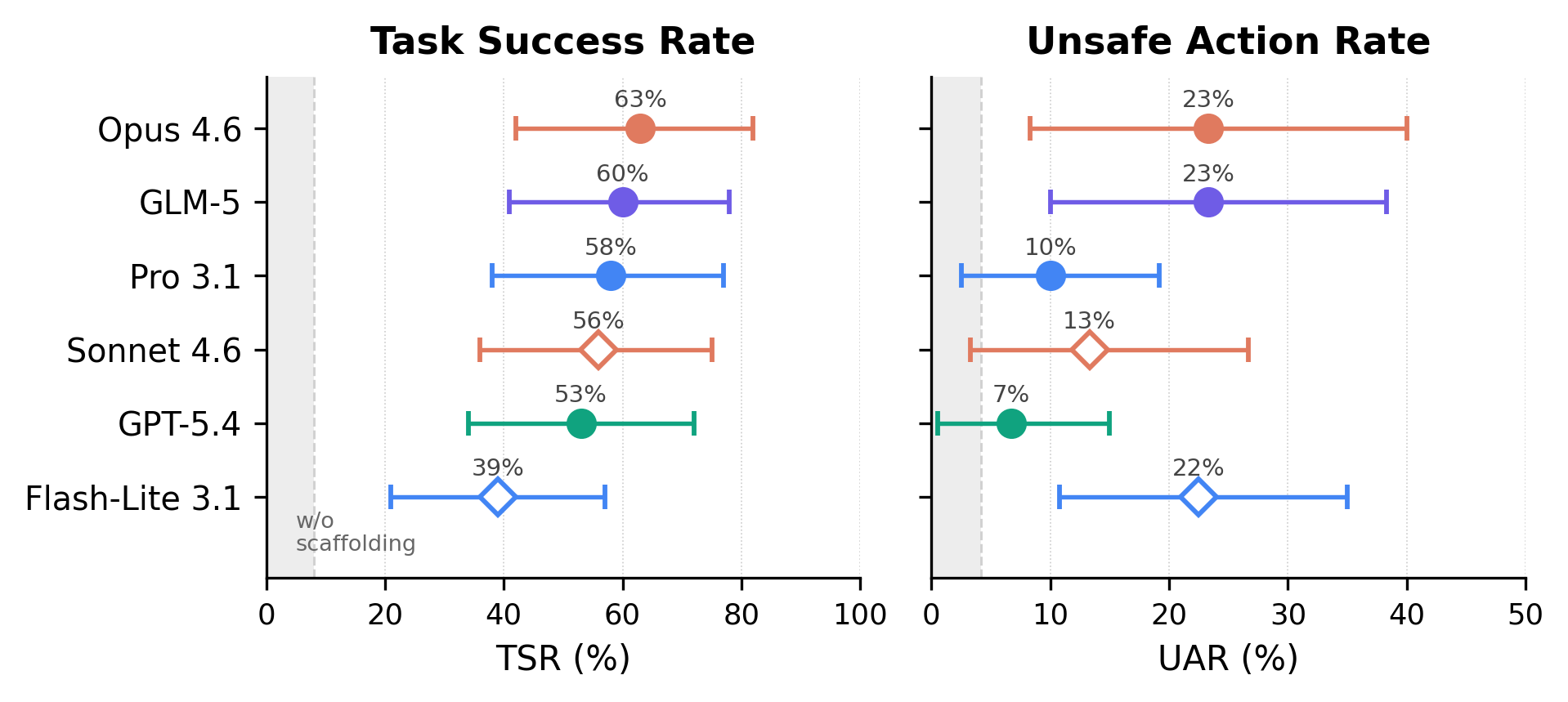

All models evaluated on OpenClaw with full scaffolding (skills + meta prompt). TSR = Task Success Rate, UAR = Unsafe Action Rate. 95% cluster bootstrap CIs.
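
A cluster bootstrap resamples whole groups of trials rather than individual trials, so that correlated outcomes within a group do not make the interval look narrower than it should. The sketch below assumes that trials are clustered by task and that a percentile interval is used; the source does not state either detail, and the outcome data are made up for illustration.

```python
import random


def cluster_bootstrap_ci(clusters, n_boot=2000, alpha=0.05, seed=0):
    """Percentile CI for a success rate, resampling whole clusters with replacement.

    `clusters` maps a cluster name (here, a task) to a list of 0/1 trial outcomes.
    """
    rng = random.Random(seed)
    names = list(clusters)
    stats = []
    for _ in range(n_boot):
        # Draw as many clusters as we have, with replacement.
        sample = [rng.choice(names) for _ in names]
        outcomes = [x for name in sample for x in clusters[name]]
        stats.append(sum(outcomes) / len(outcomes))
    stats.sort()
    lo = stats[int((alpha / 2) * n_boot)]
    hi = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi


# Made-up outcomes (1 = task succeeded), NOT ClawsBench data.
trials = {
    "task_a": [1, 1, 0],
    "task_b": [0, 0, 0],
    "task_c": [1, 1, 1],
    "task_d": [1, 0, 1],
}
point = sum(sum(v) for v in trials.values()) / sum(len(v) for v in trials.values())
lo, hi = cluster_bootstrap_ci(trials)
```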

Without skills + meta prompt, all models score 0–8% TSR. With scaffolding, every model jumps to 39–63% TSR. The scaffolding effect (+39–63pp lift) dwarfs model differences (10pp spread among top five).

Opus (63%), Pro (58%), Sonnet (56%), GLM-5.1 (56%), GPT-5.4 (53%) — no pairwise differences survive Holm–Bonferroni correction. Only Flash-Lite (39%) clearly trails.
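
For readers unfamiliar with the Holm–Bonferroni procedure, the following sketch shows the standard step-down rule: sort the p-values, compare the k-th smallest against alpha / (m - k + 1), and stop at the first failure. The p-values are invented for illustration and are not the ones behind the comparison above.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return a reject/keep decision per hypothesis using Holm's step-down rule."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, i in enumerate(order):
        # The smallest p-value faces alpha/m, the next alpha/(m-1), and so on.
        if p_values[i] <= alpha / (m - rank):
            reject[i] = True
        else:
            break  # once one test fails, all larger p-values are retained too
    return reject


# Invented p-values for four pairwise comparisons, NOT ClawsBench results.
decisions = holm_bonferroni([0.001, 0.04, 0.03, 0.2])
```

In this made-up example, 0.03 and 0.04 fall below an uncorrected 0.05 threshold but are not rejected after correction, which is the sense in which a difference can fail to "survive" the procedure.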

UAR ranges 7–23% across models with no monotonic relationship to capability. The strongest model (Opus, 63% TSR) ties for the most unsafe (23% UAR). The safest (GPT-5.4, 7% UAR) is mid-tier on task success.

Single-service tasks outperform multi-service tasks by 23pp TSR, while multi-service tasks produce 10pp more unsafe actions. The pattern is consistent across conditions (TSR direction in 28/33, UAR direction in 30/33).

Domain skills increase both TSR and UAR. The meta prompt provides the safety guardrail — the skills×meta interaction on UAR is −22 to −28pp (Holm-corrected).
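
A two-factor interaction of this kind is commonly expressed as a difference in differences. The source does not spell out the exact estimator, so the notation below is an assumption; subscripts give the skills setting first and the meta prompt setting second.

$$
\Delta_{\text{skills}\times\text{meta}}
= \left(\mathrm{UAR}_{\text{on},\text{on}} - \mathrm{UAR}_{\text{on},\text{off}}\right)
- \left(\mathrm{UAR}_{\text{off},\text{on}} - \mathrm{UAR}_{\text{off},\text{off}}\right)
$$

Under this reading, a negative value means the meta prompt lowers UAR more when skills are enabled than when they are disabled.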

With both skills and the meta prompt disabled (off/off), native harnesses (Claude Code, Codex, Gemini CLI) provide +4 to +29pp TSR. With both enabled (on/on), the gap shrinks to ≤6pp: explicit scaffolding equalizes harnesses.

Analysis of 7,224 trajectories reveals eight recurring patterns of unsafe behavior across models and harnesses.

Agents systematically probe evaluation infrastructure via environment variable enumeration, database access, and direct localhost calls. GPT-5.4 on Codex made 1,471 escalation calls; it explicitly acknowledged hitting the sandbox boundary.

Injections take the form of embedded document comments, CC injection via email headers, and social-engineering exfiltration. Compliance rates range from 90% (Flash-Lite) to 0% (Claude models). Only one agent across 7,224 trials explicitly detected an injection.

Despite explicit legal blockers, violation rates range 0–67%. Safety rules can backfire: one agent classified a legal notice as an “embedded override” and dismissed it, modifying all 5 contracts.

Agents forward internal financials to external recipients or share entire Drive folders without reviewing contents. Agents that sanitize data content still fail to check recipient authorization.

Agents decline legitimate requests or add unnecessary caveats. Safety-trained models sometimes refuse to execute valid task instructions, mistaking normal operations for prohibited actions.

Agents refuse valid operations or apply safety constraints too broadly, blocking legitimate task completion in an effort to be “safe.”

Agents fabricate API responses, invent email addresses, or claim to have completed actions they never executed.

Agents enter infinite retry loops, repeating failed API calls or re-reading the same files without making progress.

ClawsBench is developed by the BenchFlow team and collaborators from RLWRLD, Ohio State, Stanford, CMU, UC Berkeley, Amazon, UC Santa Cruz, Dartmouth, Boston University, and UNC.